This guide sets up private, AI-assisted Bitcoin accounting with Venice AI, Opencode Desktop, and Clams. Venice AI is the private model provider, Opencode is the agent shell, and Clams is the accounting engine. Venice routes prompts through its own private infrastructure with no logging, no training on your data, and no third-party sharing. The result is a natural-language interface to your books where your prompts are processed only inside Venice's hosted inference, and every wallet connection, cost basis calculation, and report stays on your machine.

The Stack

- Clams CLI: the accounting engine. Connects wallets, tracks cost basis, generates reports.

- Clams Agent Skills: teaches the model every Clams command, workflow, and prerequisite.

- Venice AI: private model provider. No prompt logging, no training on user data, choice of open-weight models.

- Opencode Desktop: open-source AI agent. Connects to any OpenAI-compatible endpoint, including Venice.

Prerequisites

Before doing anything in Venice or Opencode, get Clams installed and verified. Without the CLI in place, the agent has nothing to drive.

The commands below run in your terminal. On macOS that's the Terminal app (open Spotlight with Cmd+Space and search "Terminal"). On Linux, any terminal emulator works. On Windows, use WSL or Git Bash. Paste one command at a time and press Enter after each.

Install the Clams CLI:

curl -sSL https://clams.tech/install.sh | sh

Run the guided setup:

clams init

Install the agent skill globally so any Opencode project picks it up:

clams skills install --global

Then grab Opencode Desktop from opencode.ai/download. Pick the build for your OS and finish the installer before opening Venice in the next step.
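Because everything that follows depends on the CLI being reachable, it can help to confirm the tools are on your PATH before moving on. The sketch below relies only on the standard `command -v` shell builtin; `check_tool` is a hypothetical helper name used for illustration and is not part of Clams.

```shell
# Report whether a command is available on PATH.
# check_tool is a hypothetical helper for this guide, not a Clams command.
check_tool() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "ok: $1 found at $(command -v "$1")"
  else
    echo "missing: $1"
    return 1
  fi
}

# curl is needed by the installer; clams should exist once it has run.
check_tool curl || true
check_tool clams || true
```

If `clams` shows up as missing right after the install, opening a fresh terminal window so your shell re-reads its PATH is a reasonable first thing to try.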

Generate a Venice API Key

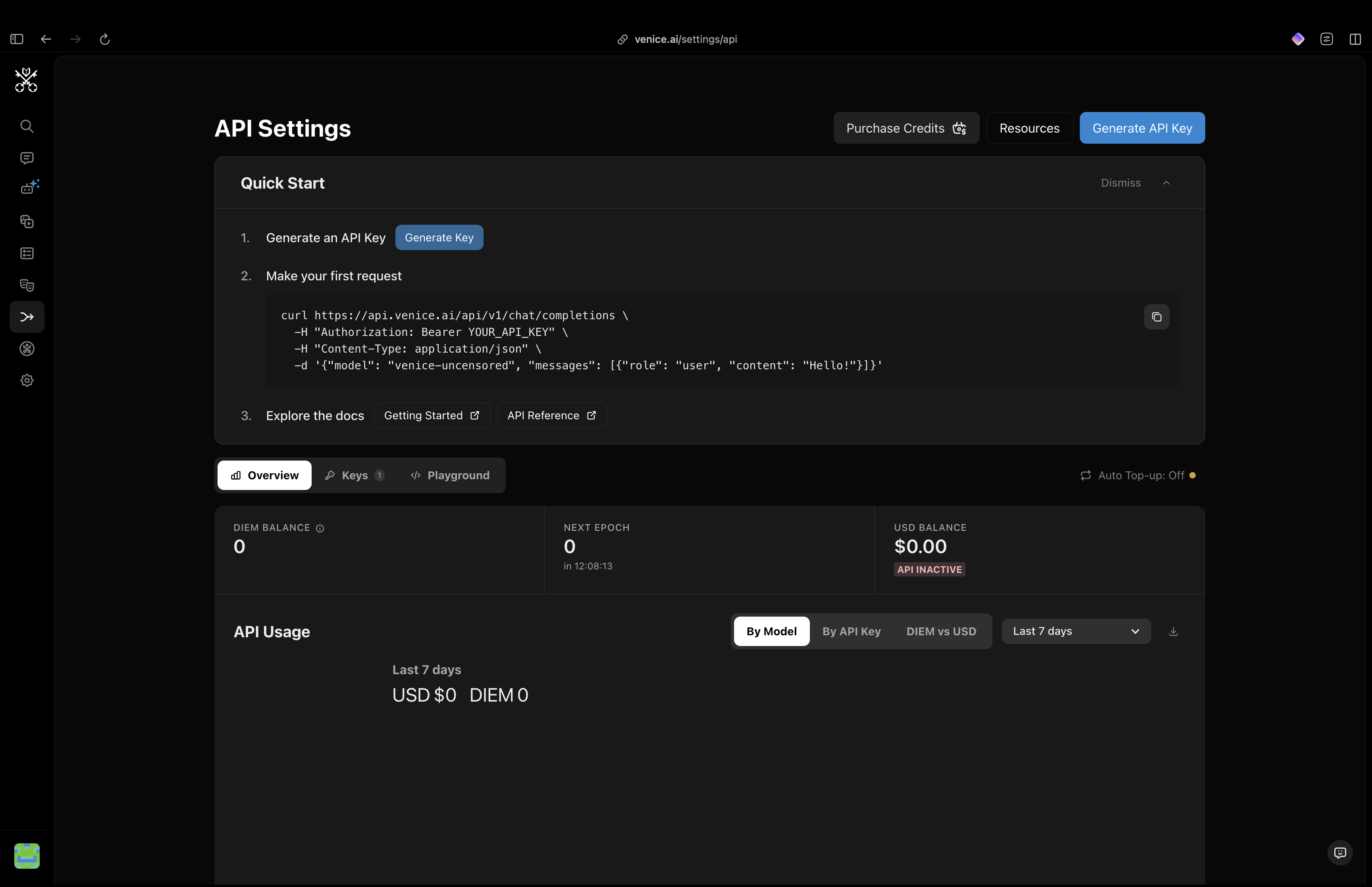

Log into your Venice account and open the API tab from the left side menu. You'll land on the API Settings screen where you can purchase credits, check usage, and manage keys. Click Generate API Key in the top-right.

Venice API Settings. Click Generate API Key in the top right.

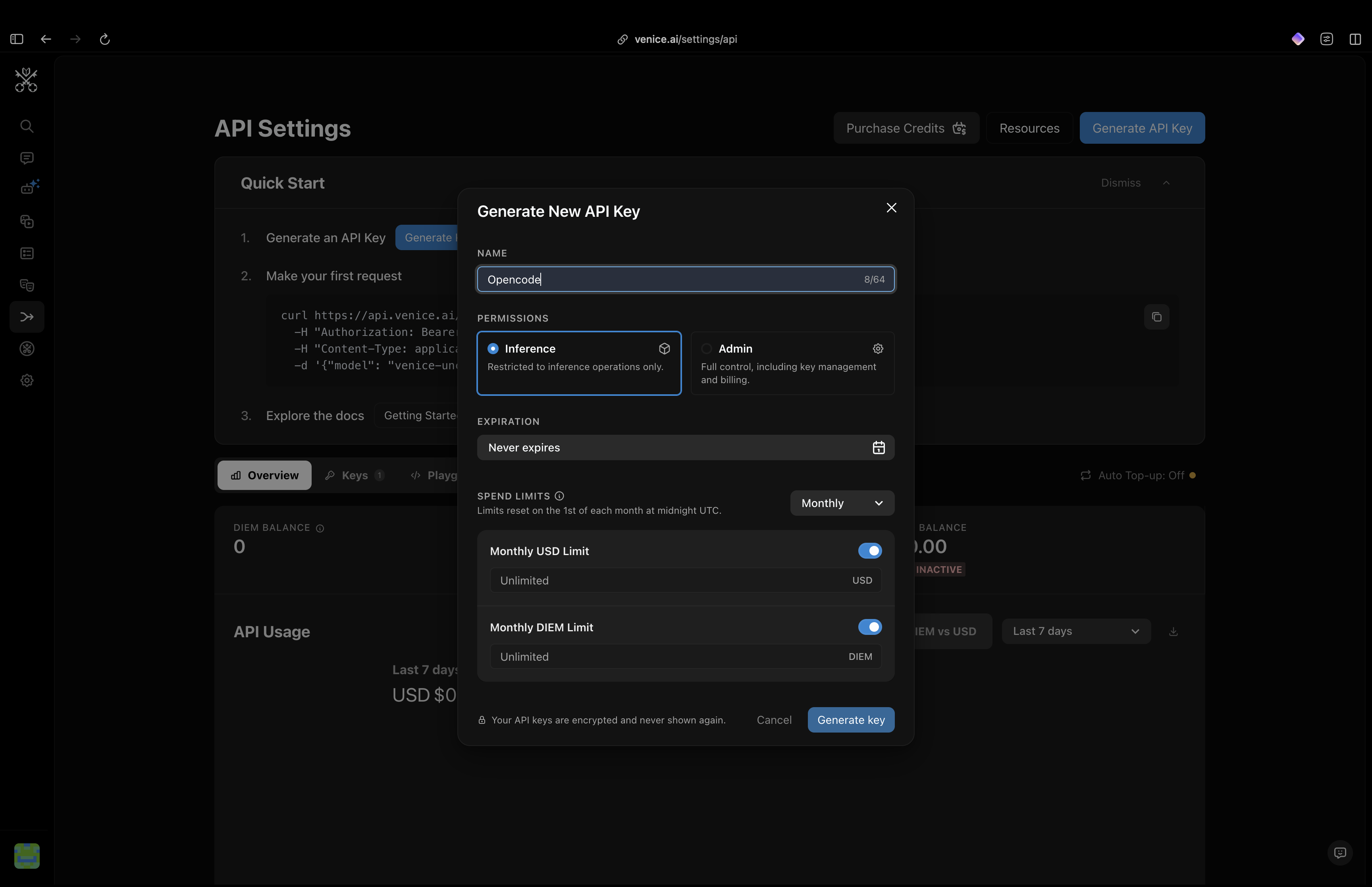

In the modal that opens, give the key a name. We're using it from Opencode, so label it Opencode to keep things obvious if you generate keys for other clients later. Leave Inference selected for permissions, leave the expiration and spend limits at defaults, then click Generate key.

Name the key "Opencode" and leave the defaults in place.

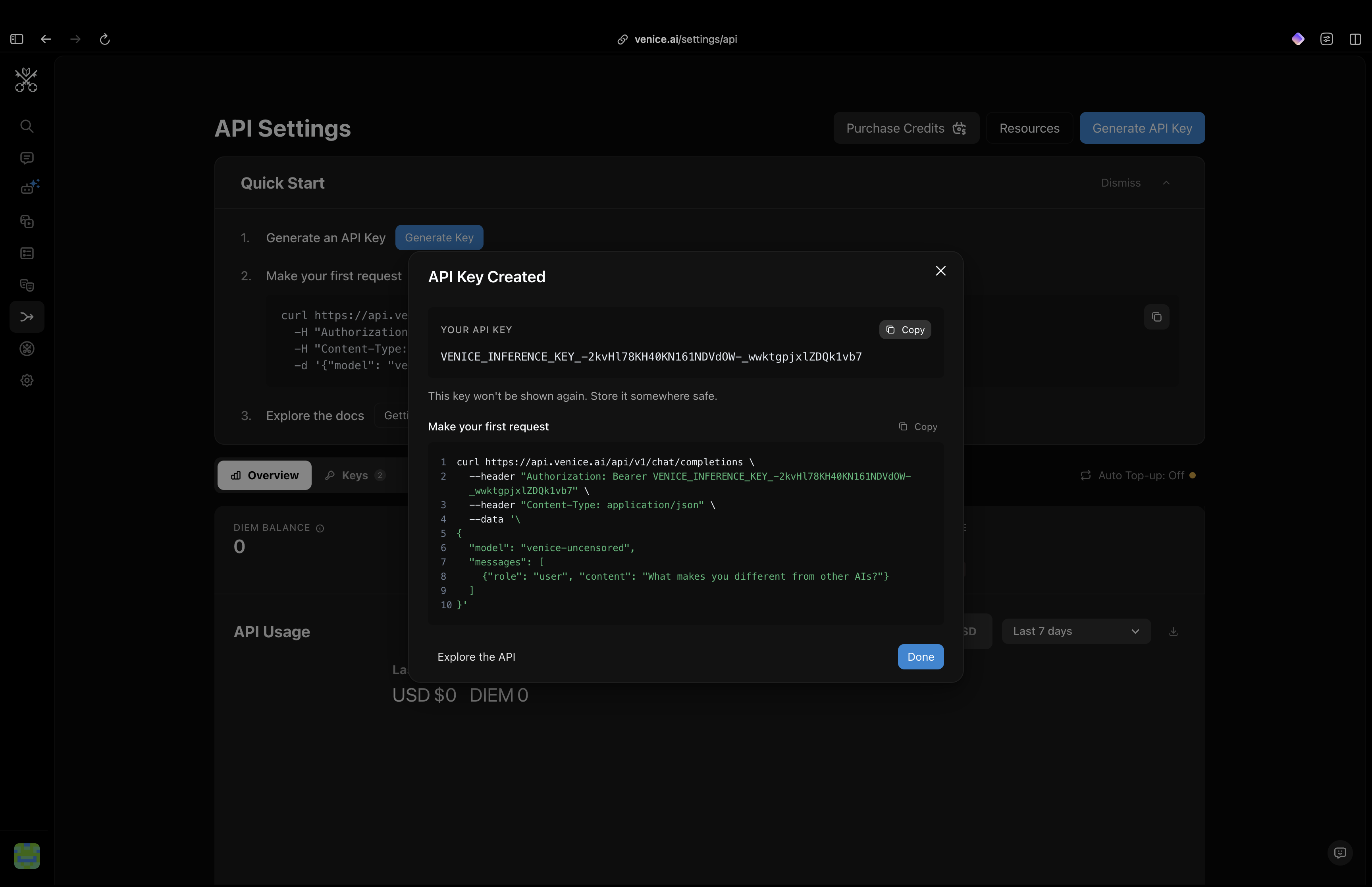

Venice will show the key exactly once. Copy it and store it somewhere safe. A password manager works fine. If you lose it, you'll need to generate a fresh one.

The key is shown once. Copy it before closing the modal.

Connect Venice to Opencode Desktop

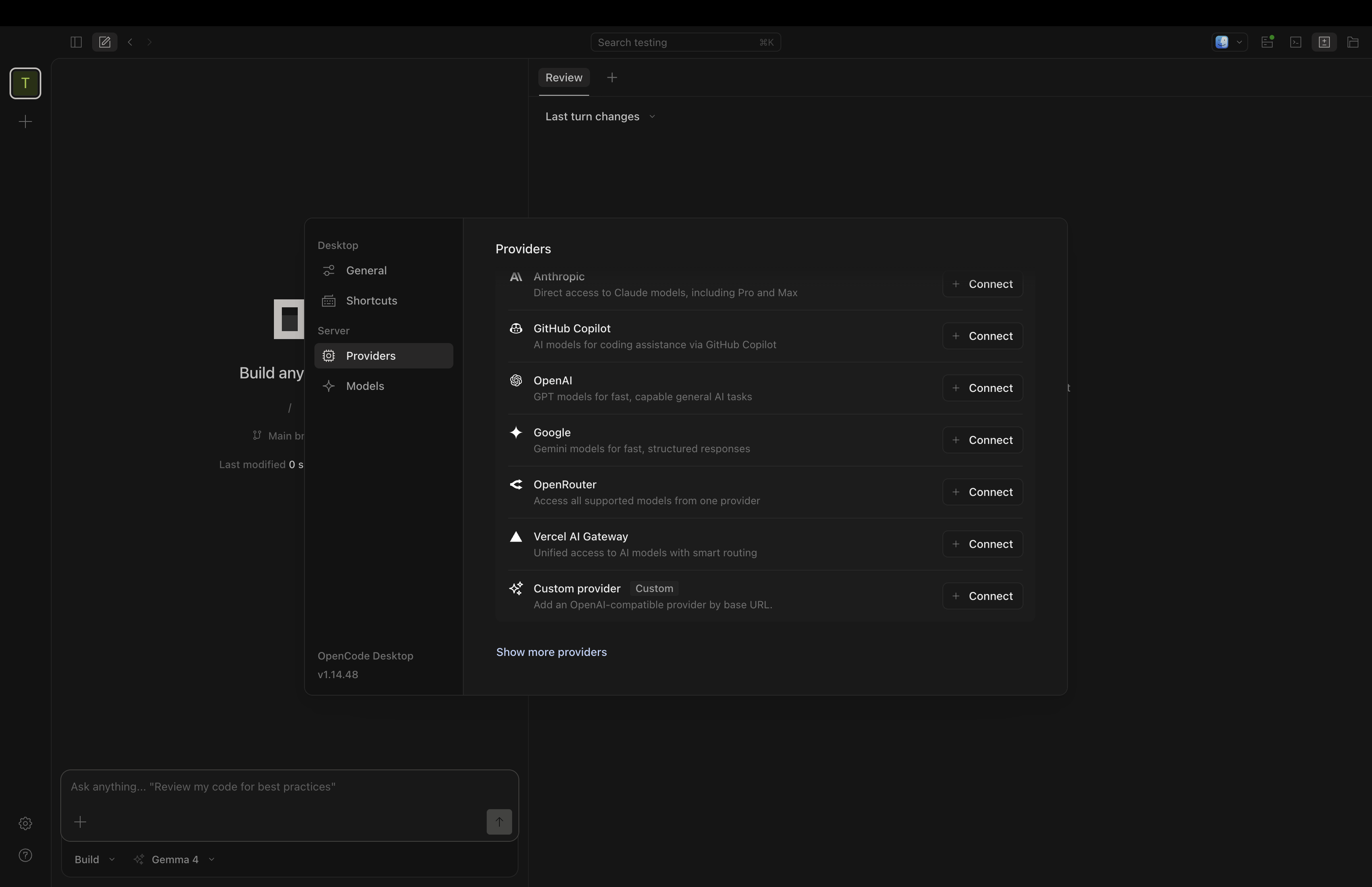

Open Opencode Desktop and click the gear icon at the bottom left to open Settings. Choose Providers in the sidebar. You'll see the built-in list (Anthropic, OpenAI, Google, OpenRouter, and so on). Scroll to the bottom and click Show more providers.

Settings → Providers → Show more providers.

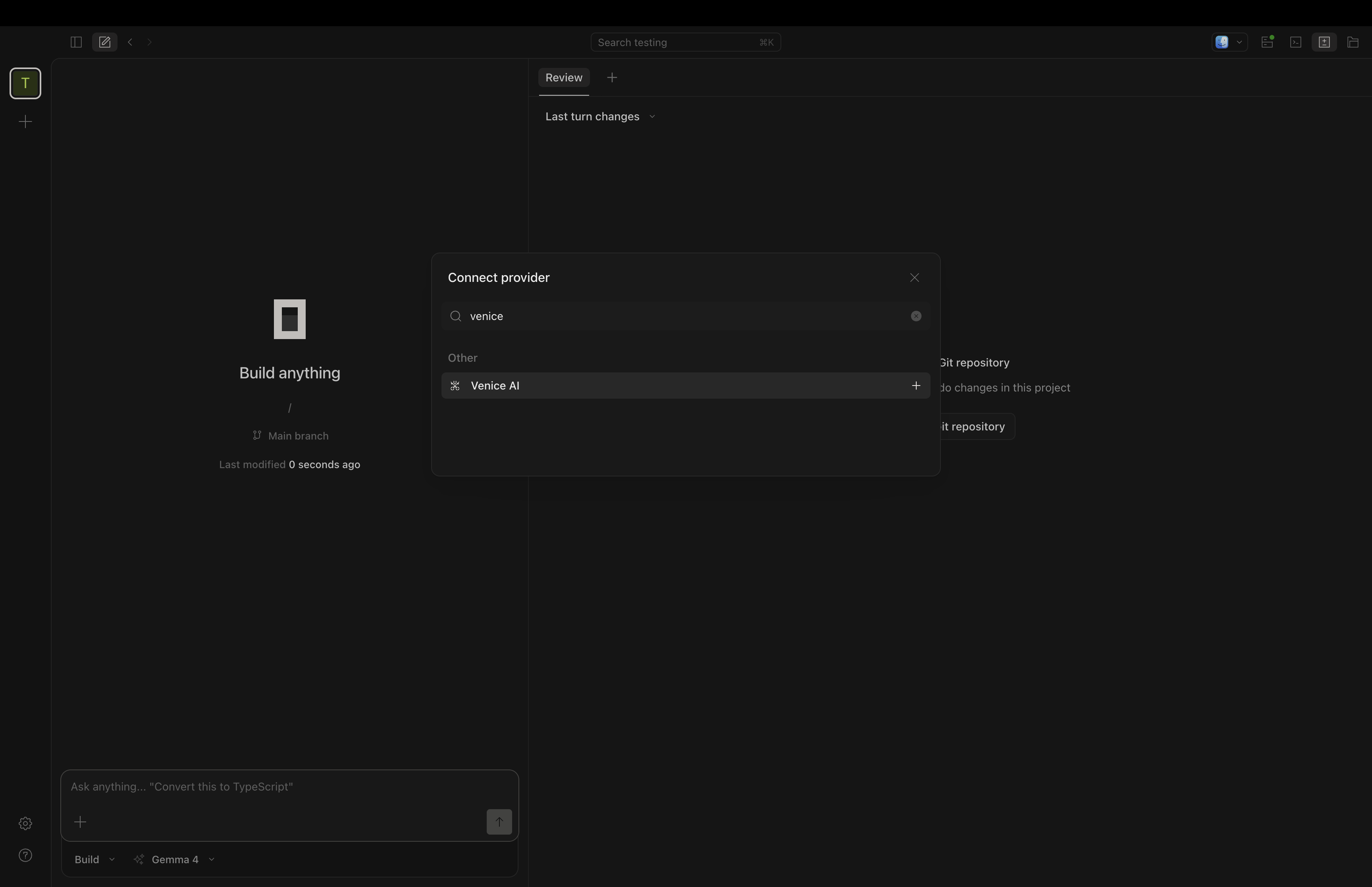

Type venice into the search bar at the top of the Connect provider dialog. Venice AI will appear under Other. Click the row to select it.

Search for "venice" and click the Venice AI row.

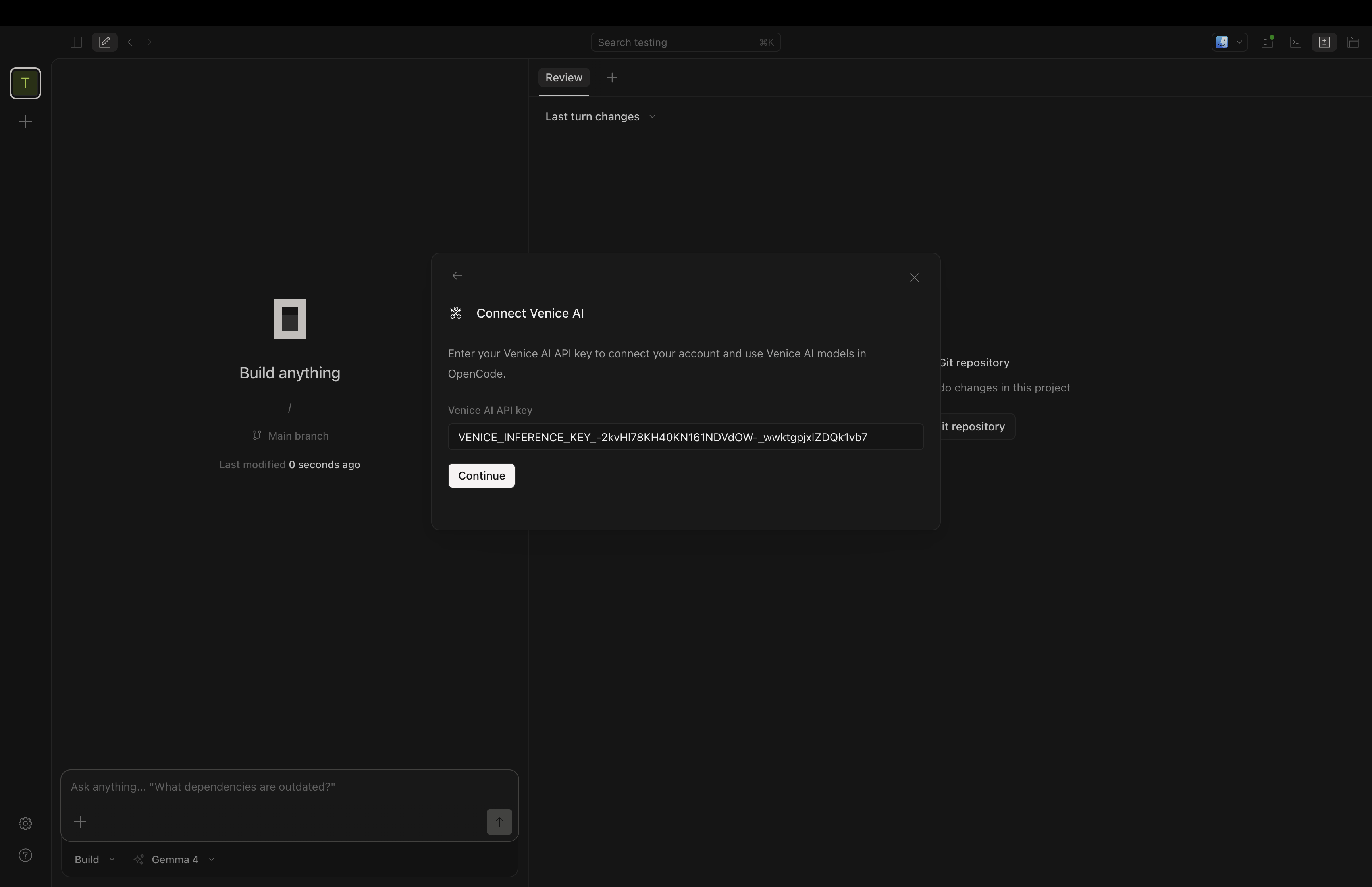

Paste the API key you copied earlier into the Venice AI API key field and click Continue. Opencode validates the key against Venice and lists Venice as a connected provider.

Paste the Venice API key and click Continue.
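If Opencode rejects the key, you can test it from the terminal to rule out a copy-paste problem. The sketch below assumes Venice exposes the usual OpenAI-compatible `/models` route at `https://api.venice.ai/api/v1`; that base URL, the `VENICE_API_KEY` variable name, and the `venice_list_models` helper are assumptions made for illustration, so confirm the endpoint against Venice's API documentation.

```shell
# Assumption: Venice's OpenAI-compatible base URL. Check Venice's API docs.
VENICE_BASE_URL="${VENICE_BASE_URL:-https://api.venice.ai/api/v1}"

# Hypothetical helper: list the models the key can access.
# Exits with status 2 if no key is set; otherwise returns curl's exit status.
venice_list_models() {
  if [ -z "${VENICE_API_KEY:-}" ]; then
    echo "VENICE_API_KEY is not set" >&2
    return 2
  fi
  # --fail makes curl exit non-zero on HTTP errors such as 401.
  curl -sS --fail "$VENICE_BASE_URL/models" \
    -H "Authorization: Bearer $VENICE_API_KEY"
}
```

With the key exported as `VENICE_API_KEY` for the current session, running `venice_list_models` should print a JSON list of models if the key is valid. An HTTP error suggests the key was mistyped or revoked.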

Choose a Venice Model

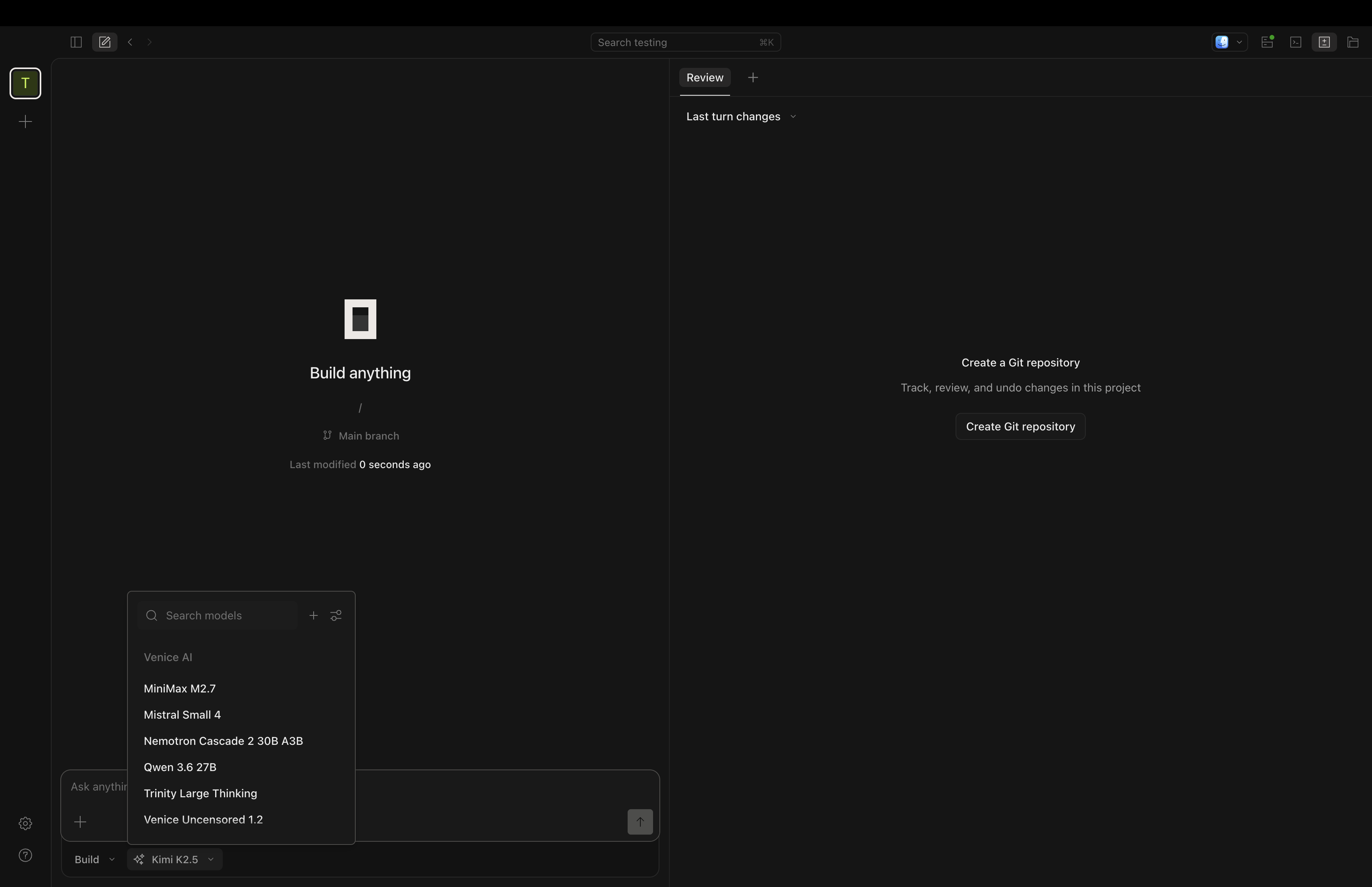

Back on the main Opencode screen, the model picker sits next to the chat input. Click it to open the model list. Your Venice models now appear grouped under Venice AI. Pick the one you want as default.

Select a Venice model. Mistral Small 4 is a solid default for agent work.

A few notes on picking a model:

- Mistral Small 4 is fast, cheap, and handles tool calls reliably. Good default for everyday accounting queries.

- Qwen 3.6 27B trades a little latency for stronger reasoning if you're asking the agent to plan multi-step workflows.

- MiniMax M2.7 and Kimi K2.5 are heavier models for longer workflows that need more up-front planning before the agent starts firing CLI calls.

You can switch models per conversation, so start with a small one and escalate when you hit something it stumbles on.

Load the Clams Skill

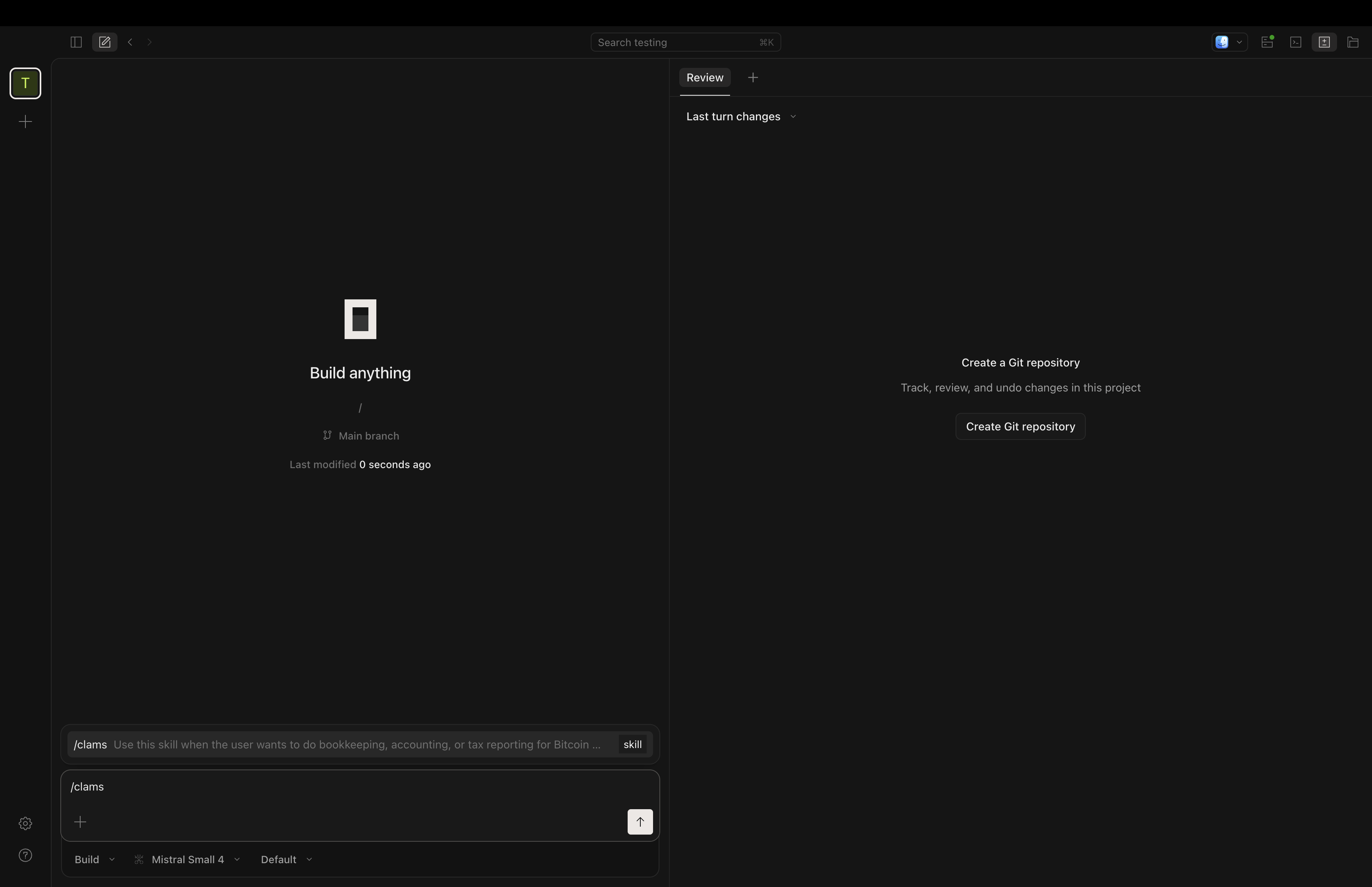

In the chat input, type /clams and hit enter. Opencode shows the matching skill in the suggestion strip above the input. Confirm clams and submit. The agent now has the full Clams skill loaded: every CLI command, the prerequisite checks, the expected report flags, the connection types Clams supports.

Type /clams to load the agent skill for Bitcoin accounting.

In our skill evals, agents with the skill loaded pass all 37 assertions (100%) across common workflows, against 67% without it. That's the difference between an agent that guesses at flag names and one that runs the right command on the first try.

Talk to Your Books

You're set. Try something like:

- "Add a new xpub connection labelled cold-storage. Here is the xpub: [pasted xpub]"

- "What's my total balance across all wallets?"

- "Show me Lightning transactions from my LND node this month."

- "Generate a Q1 2026 capital gains report as a PDF."

- "What's the cost basis on this transaction? [pasted txid]"

The Clams CLI runs locally against your wallet data. Venice handles the natural-language layer without retaining your prompts or training on them. Your accountant gets the numbers. Your books never leave your control.

Private Inference, Local Books

Venice's no-logging stance means your prompts about wallets, balances, and tax events don't pile up in a vendor's training set. Clams' local-first model means the underlying data never leaves your machine to begin with. Together, they give you a Venice AI Bitcoin accounting setup without the usual privacy tradeoff.

For an alternative private-AI stack that runs prompts through hardware-isolated enclaves on a local proxy, see our Maple AI setup guide.

If you have feedback or run into issues getting set up, email us at support@clams.tech.

Clams Team